The FIFO eviction policy prioritizes incoming addresses over those that have been in cache already. First-In, First-Out (FIFO) Eviction Policy The policies here are (1) FIFO, (2) LRU, (3) LFU, and (4) random. We need to determine who gets kicked out of cache when we’ve exhausted the number of ways. Below are several eviction policies, but it isn’t an exhaustive list. What happens when we’ve exhausted the number of ways that we have? In this case, we must employ an eviction policy. This search takes time, and isn’t free!įully-associative cache is said to have 1-set, m-ways. However, since we no longer have a set function, we have to add logic to search the cache for a given memory address. The number of ways is the number of memory values we can store in that single set.įor the example above, we could easily store 0x0, 0x4, and 0x8 all in cache.

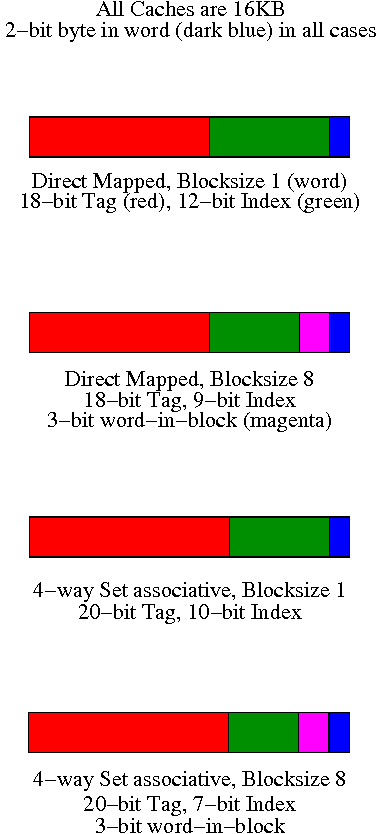

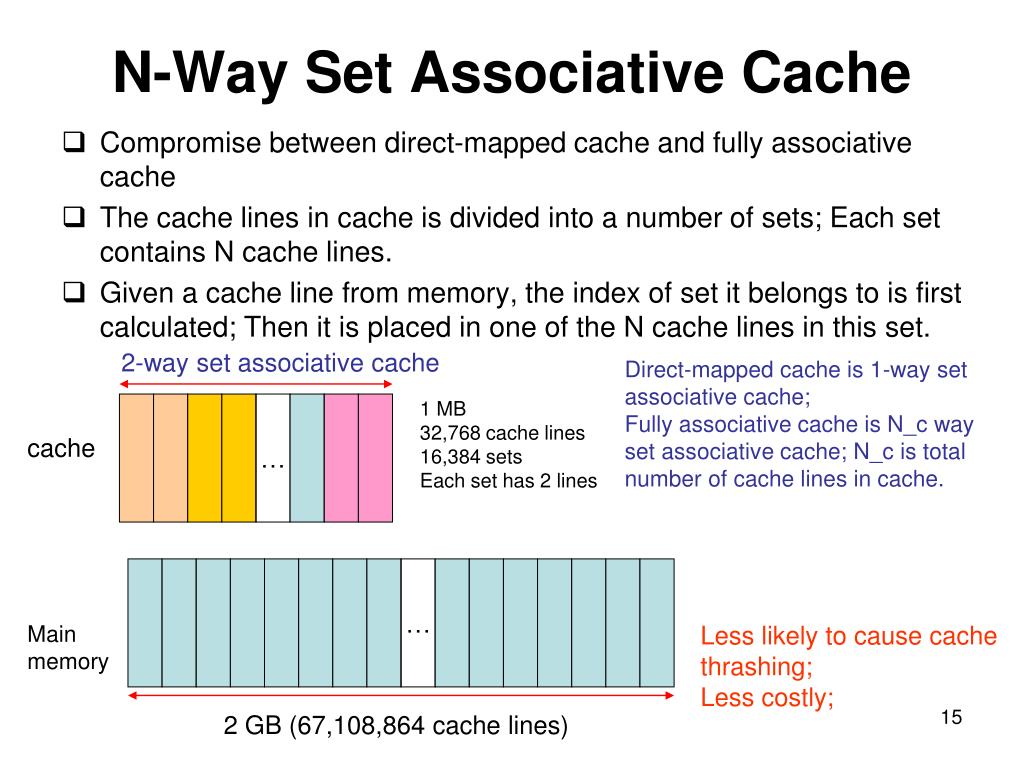

All addresses map to this set, but we divide that set into several ways. Instead of using sets, we can use all of the cache to store whomever gets into cache first. You see how we wasted the other sets in direct-mapped cache. The more sets we have, the larger our cache must be, however the less likely we will have a collision. Recall that the number of sets is determined by the cache designer. This is called evicting the previous address.ĭirect-mapped cache is considered an n-sets, 1-way cache, where n is the number of sets. So, when 0x4 came in, it had to kick out 0x0. Also recall that in direct-mapped cache, only one value may enter the set. Sets 1, 2, and 3 were never used, so we just wasted our time. We read three addresses, but they all went into set 0. Locating an entry in direct-mapped cache is very simple, which is the benefit of direct-mapped cache. The diagram below shows the following addresses entering cache: Recall that 3 is \(11_2\), so with this set function, we can have the possibilities of 0b00, 0b01, 0b10, and 0b11, which equates to set 0, 1, 2, and 3. The chances that you’ll use a value you previously used is high, so therefore we put it in faster memory. Storing the most recent values in cache exploits the principle of temporal locality. This means that not only do I get the value I wanted, but I get all the values around that. Cache also stores a block of data, which can be upwards of 16 bytes or more depending on the cache design.

Temporal and Spatial LocalityĬache operates by storing memory addresses and their values that were accessed most recently. So, now we know how the memory controller treats cache, but how does the cache itself work? There are three types of caches: (1) direct-mapped, (2) set-associative, and (3) fully-associative. Some architectures only have one level of cache, or maybe only two. Obviously, we want to maximize cache hits and minimize cache misses. When the memory controller turns up empty, it is called a cache miss. If the address is not found in level 1 cache, the memory controller then heads off to level 2 cache and looks in there. If the tag is found, then the memory controller grabs the value from level 1 cache, and its job is done. The tag and the set will be used to form a full memory address. The other part of the memory address can be found by the set function itself. It finds what is known as a tag, which is a portion of the memory address used to find it in a table. When the CPU asks for memory to load or store, the memory controller starts with level 1 cache. Everything in level 1 cache is also in level 2 and level 3 and in RAM. Everything in level 2 cache is also in level 3 and in RAM. Cache is interesting because everything in level 3 cache is also in RAM. In this case, level 1 is the fastest, but the smallest memory before we get to the CPU registers. This is the basic building block of multilevel cache. The solution is usually simple: avoid iterating in powers of two, make the last dimensions of multi-dimensional arrays a slightly different size or use any other method to insert “holes” in the memory layout, or create some seemingly random bijection between the array indices and the locations where the data is actually stored.A higher-numbered cache level is closer to RAM, but is slower. Luckily, such issues are more of an anomaly rather than serious problems. When the array size is a multiple of a large power of two, then the indices of the “hottest” elements, the ones we likely request on the first dozen or so iterations, will also be divisible by some large powers of two and map to the same cache line - kicking each other out and causing a ~20% performance decrease.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed